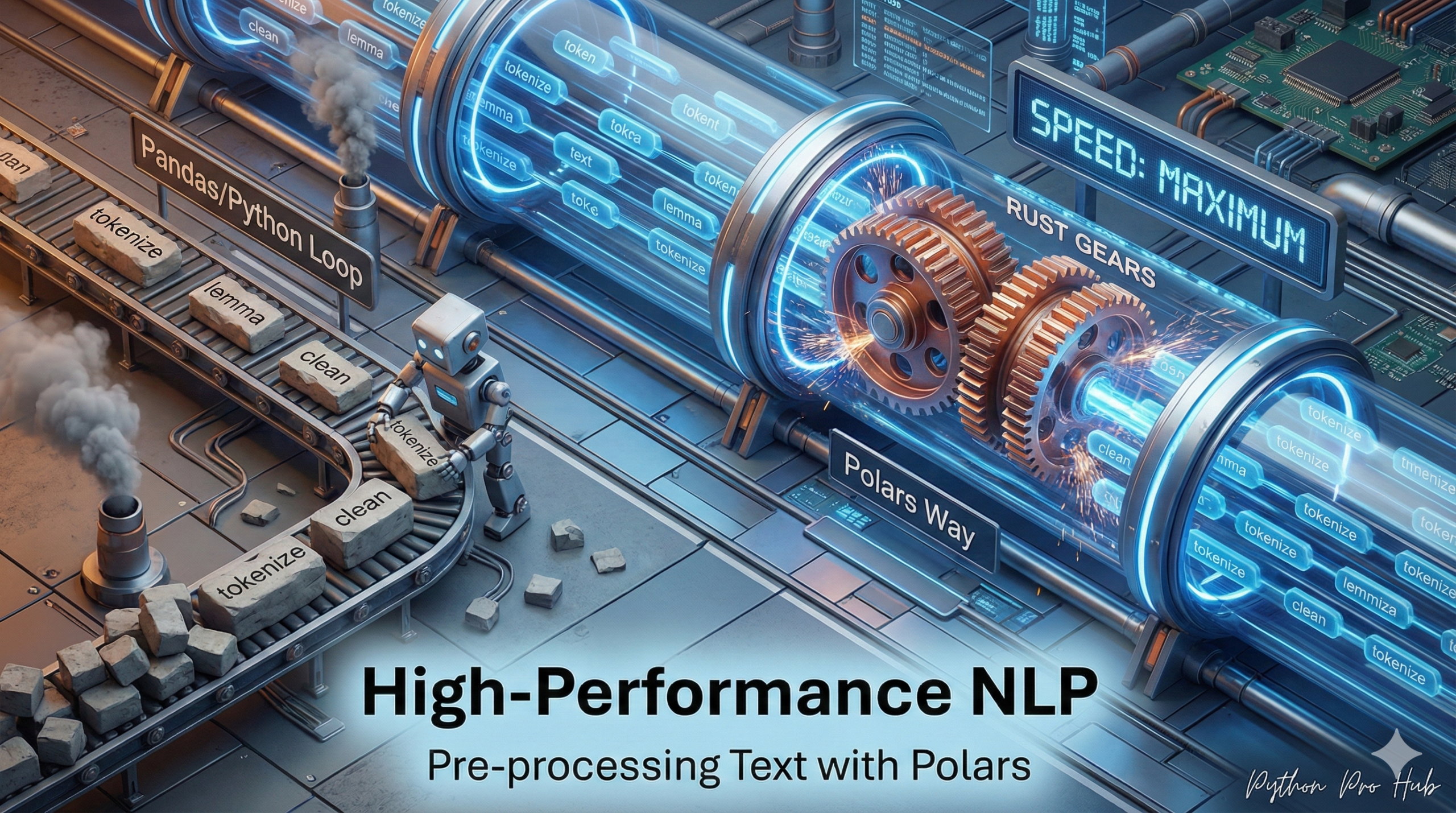

When preparing text data for an AI model, you’re often working with millions of rows, which is why many practitioners turn to Polars for NLP pre-processing. In pandas, row-wise cleaning with Series.apply() is a major bottleneck: it calls a Python function once per row, on a single thread.

Polars runs these text operations in native, multi-threaded code, which can make it 10-100x faster than a row-wise apply, depending on the data and hardware. Let’s clean a dataset using the Polars Expression API.

The Goal

We want to take messy user reviews and turn them into clean “tokens” (words) ready for a model.

    import polars as pl

    df = pl.DataFrame({
        "review": [
            "I LOVE this product! 10/10",
            "It was... okay? Not bad.",
            "Worst item ever. DO NOT BUY!!",
        ]
    })

The Polars “Chained” Expression

Let’s do all our cleaning in a single chained expression:

- Convert to lowercase.

- Remove all punctuation and numbers.

- Split the clean sentence into a list of words.

    clean_df = df.with_columns(
        pl.col("review")
        .str.to_lowercase()
        .str.replace_all(r"[^a-z\s]", "")  # Regex: Keep only letters and spaces
        .str.split(by=" ")
        .alias("tokens")
    )

    print(clean_df)

Output:

    shape: (3, 2)
    ┌───────────────────────────┬──────────────────────────────────┐
    │ review                    ┆ tokens                           │
    │ ---                       ┆ ---                              │
    │ str                       ┆ list[str]                        │
    ╞═══════════════════════════╪══════════════════════════════════╡
    │ I LOVE this product! 10/10┆ ["i", "love", "this", "product",…│
    │ It was... okay? Not bad.  ┆ ["it", "was", "okay", "not", "ba…│
    │ Worst item ever. DO NOT B…┆ ["worst", "item", "ever", "do", …│
    └───────────────────────────┴──────────────────────────────────┘

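This is the modern, high-speed way to prepare text data. The tokens column can now be passed to a Hugging Face tokenizer as pre-split words (with is_split_into_words=True) or to a classic scikit-learn TfidfVectorizer configured to accept pre-tokenized input.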

Key Takeaways

- Preparing text data for AI models requires handling millions of rows efficiently.

- Polars text pre-processing can be significantly faster than pandas apply(), up to 100x, because it runs in parallel.

- The goal is to clean messy user reviews into organized tokens suitable for models.

- Using the Polars Expression API allows for streamlined cleaning in a single command.

- The cleaned tokens can be passed to a Hugging Face tokenizer or to a TfidfVectorizer set up for pre-tokenized input.