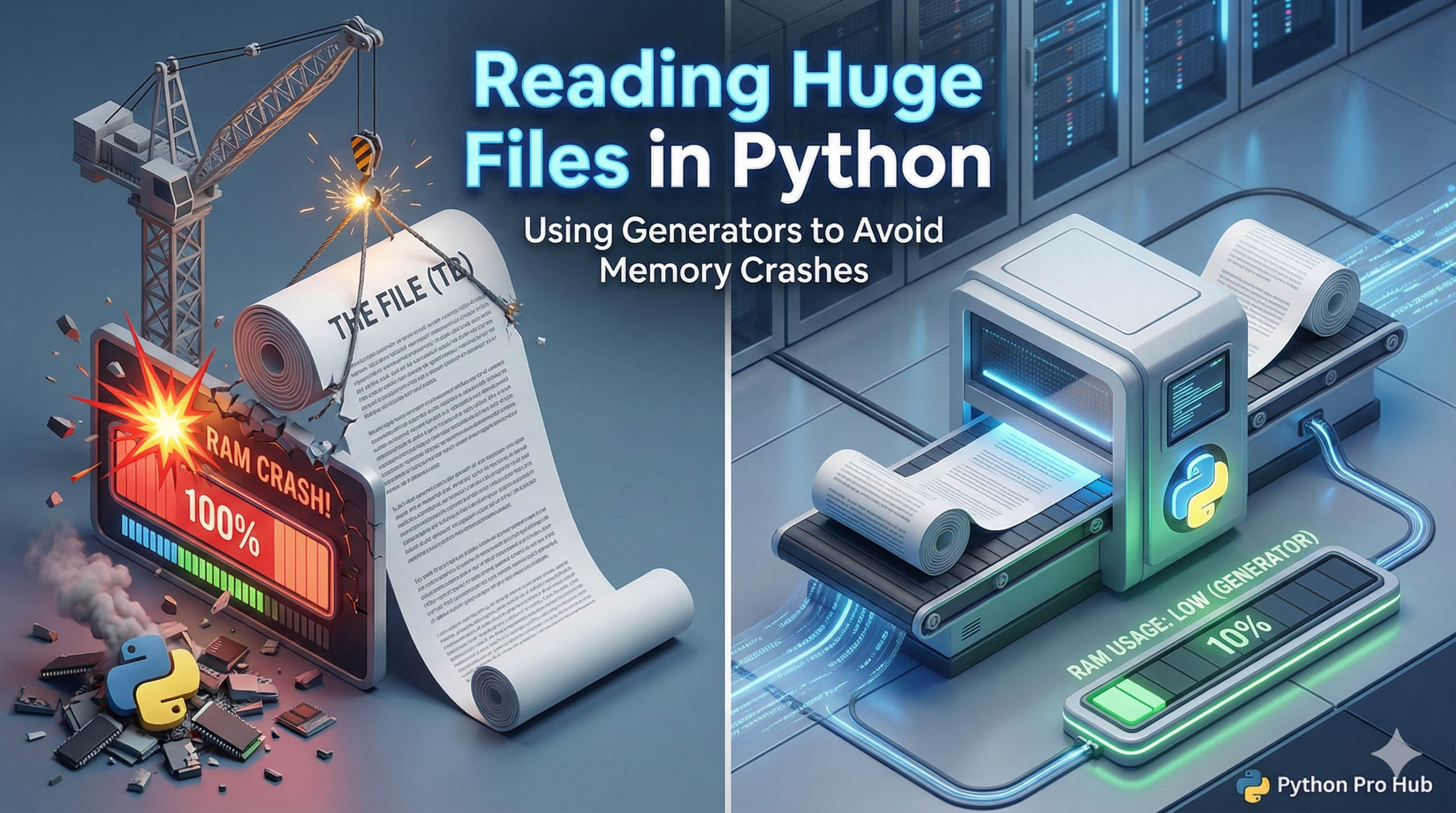

We learned about Generators earlier. Now let’s use them for a real-world problem: Big Data. One common challenge is reading huge files with Python efficiently.

Imagine you have a 50GB log file. If you try this standard beginner approach, Python will attempt to hold the entire file in memory, and your program will most likely run out of RAM and crash:

    # DON'T DO THIS with big files!
    with open("massive_log.txt", "r") as f:
        # This tries to load ALL 50GB into RAM at once
        lines = f.readlines()
        for line in lines:
            process(line)

The Generator Solution

Python’s file object is already a lazy iterator that hands you one line at a time, just like a generator would. You don’t even need to write a special function. You just need to loop over the file object directly.

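    # DO THIS instead
    with open("massive_log.txt", "r") as f:
        # The file object 'f' yields one line at a time efficiently
        for line in f:
            process(line)

This keeps memory use small and roughly constant, whether the file is 5MB or 5TB, because only the current line and a small read buffer are held at once. The one caveat is a file with extremely long lines, since each line is still loaded whole.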
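If you want to see the difference for yourself, here is a minimal sketch that compares the two approaches with the standard library's `tracemalloc` module. The helper names (`make_sample_log`, `peak_memory`, `count_eager`, `count_lazy`) and the sample log contents are made up for this illustration, and the exact numbers will vary by machine and Python version.

```python
import os
import tempfile
import tracemalloc


def make_sample_log(num_lines=20_000):
    """Write a throwaway log file and return its path (made-up sample data)."""
    fd, path = tempfile.mkstemp(suffix=".log")
    with os.fdopen(fd, "w") as f:
        for i in range(num_lines):
            f.write(f"2024-01-01 INFO request {i} handled in 12ms\n")
    return path


def peak_memory(func, path):
    """Run func(path) and return (result, peak bytes allocated by Python)."""
    tracemalloc.start()
    try:
        result = func(path)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


def count_eager(path):
    # Loads every line into a list before counting.
    with open(path, "r") as f:
        return len(f.readlines())


def count_lazy(path):
    # Holds only one line (plus the read buffer) at a time.
    with open(path, "r") as f:
        return sum(1 for _ in f)


path = make_sample_log()
try:
    eager_count, eager_peak = peak_memory(count_eager, path)
    lazy_count, lazy_peak = peak_memory(count_lazy, path)
finally:
    os.remove(path)

print(f"readlines():   {eager_count} lines, peak {eager_peak / 1024:.0f} KiB")
print(f"for line in f: {lazy_count} lines, peak {lazy_peak / 1024:.0f} KiB")
```

Both functions count the same number of lines, but the peak for `readlines()` grows with the size of the file while the peak for the loop stays roughly flat. Try raising `num_lines` and watch only the first number climb.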

Writing a Custom Chunk Reader

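Sometimes a “line” isn’t the right unit: binary files such as videos have no meaningful line breaks. In that case you can read fixed-size chunks instead, for example 1MB at a time.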

    def read_in_chunks(file_object, chunk_size=1024*1024):
        """Lazy function (generator) to read a file piece by piece."""
        while True:
            data = file_object.read(chunk_size)
            if not data:
                break
            yield data

    with open("massive_video.mp4", "rb") as f:
        for chunk in read_in_chunks(f):
            process(chunk)
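What might `process(chunk)` actually do? One common case is computing a checksum. The sketch below feeds each chunk into `hashlib.sha256`, so the whole file never has to sit in memory. The `sha256_of_file` helper and the temporary demo file are assumptions made for this example, and `read_in_chunks` is repeated so the snippet runs on its own.

```python
import hashlib
import os
import tempfile


def read_in_chunks(file_object, chunk_size=1024 * 1024):
    """Yield successive chunks from a file opened in binary mode."""
    while True:
        data = file_object.read(chunk_size)
        if not data:
            break
        yield data


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file while holding at most one chunk in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in read_in_chunks(f, chunk_size):
            digest.update(chunk)
    return digest.hexdigest()


# Demo on a small temporary file standing in for a large one.
payload = b"example bytes " * 1000
fd, path = tempfile.mkstemp()
with os.fdopen(fd, "wb") as f:
    f.write(payload)
try:
    # A tiny chunk size forces many iterations; the digest is unchanged.
    chunked = sha256_of_file(path, chunk_size=64)
finally:
    os.remove(path)

# Compare against hashing the same bytes in one go.
print(chunked == hashlib.sha256(payload).hexdigest())
```

The chunk size only affects how many reads happen, not the result, so you can tune it for speed without changing the checksum.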