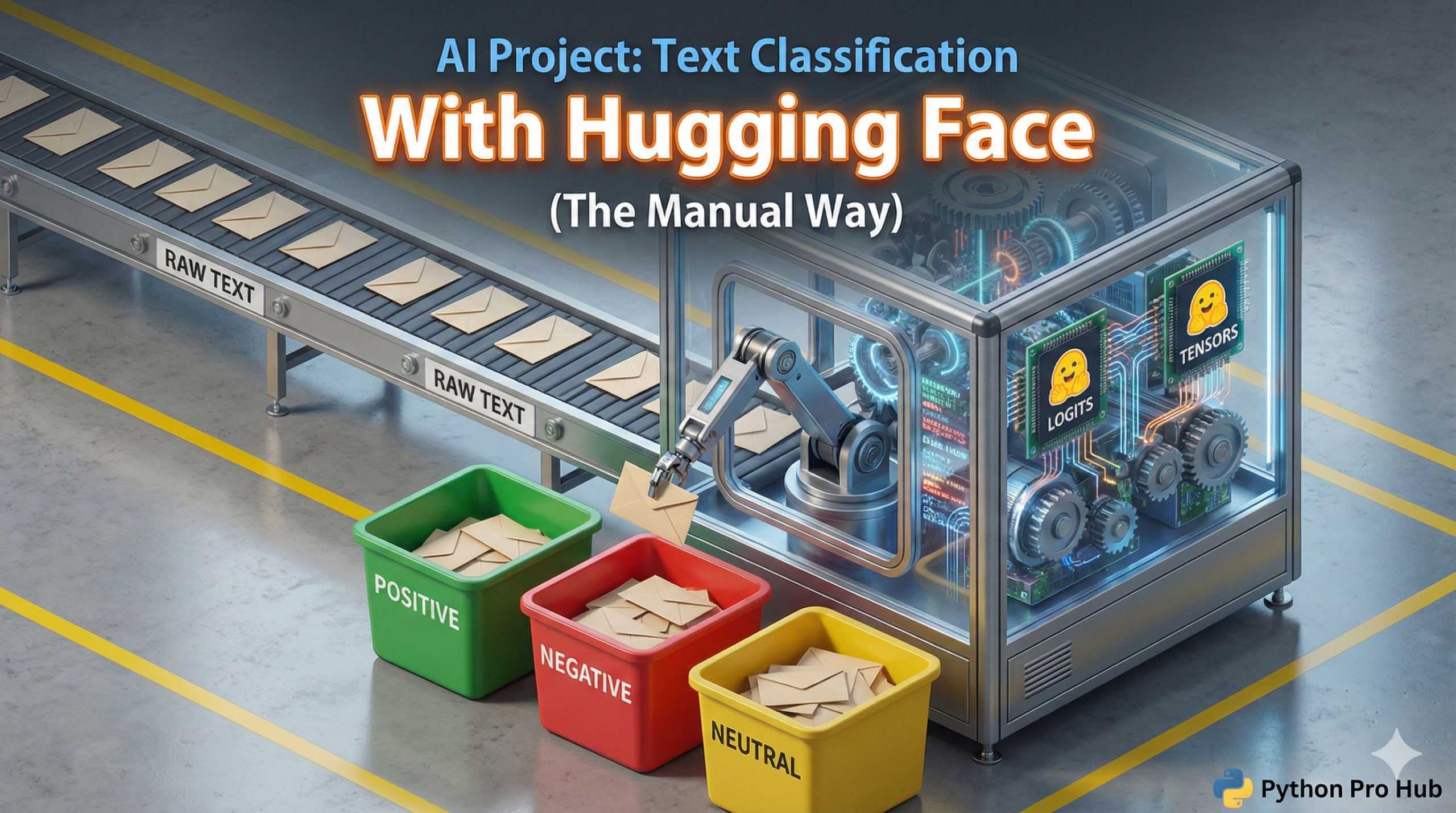

The Hugging Face pipeline is great for simple tasks, and it’s best known for text classification. But what if you need the raw numbers (logits), or want to fine-tune a model?

In that case, you load the tokenizer and the model separately. Let’s rebuild our sentiment analyzer the “pro” way.

Step 1: Install & Load

```bash
pip install transformers torch
```

```python
from transformers import AutoTokenizer, AutoModelForSequenceClassification
import torch

# This is a popular model trained for sentiment
model_name = "distilbert-base-uncased-finetuned-sst-2-english"

# 1. Load the Tokenizer
tokenizer = AutoTokenizer.from_pretrained(model_name)

# 2. Load the Model
model = AutoModelForSequenceClassification.from_pretrained(model_name)
```

Step 2: Tokenize and Predict

```python
text = "This movie was one of the best I've ever seen."

# 1. Tokenize the text (convert to numbers)
inputs = tokenizer(text, return_tensors="pt")

# 2. Feed the numbers into the model
with torch.no_grad():  # (Good practice: disables gradient calculation)
    outputs = model(**inputs)

# 3. Get the raw "logits" (the model's direct output)
logits = outputs.logits
print(f"Raw Logits: {logits}")
# Output: tensor([[-4.27, 4.68]])
```

Step 3: Interpret the Results

The logits are the raw scores. We need to convert them to probabilities (0% to 100%) using softmax.
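To make the conversion concrete, here is a minimal pure-Python sketch of softmax applied to the two logits printed in Step 2. The helper name `softmax` and the hard-coded list are illustrative assumptions; they are not part of the `transformers` API.

```python
import math

def softmax(scores):
    """Turn a list of raw scores into probabilities that sum to 1."""
    # Subtract the largest score before exponentiating for numerical stability
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# The two logits from Step 2, in the order [NEGATIVE, POSITIVE]
logits = [-4.27, 4.68]
probs = softmax(logits)
print([f"{p:.4%}" for p in probs])
```

With these inputs the NEGATIVE class ends up at roughly 0.013% and the POSITIVE class at roughly 99.987%. `torch.nn.functional.softmax` performs the same calculation directly on tensors.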

```python
# 4. Convert logits to probabilities
predictions = torch.nn.functional.softmax(logits, dim=-1)
print(f"Probabilities: {predictions}")
# Output (approximately, given the logits above): tensor([[1.30e-04, 9.9987e-01]])

# 5. Get the winning class (argmax over the class dimension)
predicted_class_id = torch.argmax(predictions, dim=-1).item()
predicted_label = model.config.id2label[predicted_class_id]
print(f"Prediction: {predicted_label}")
# Output: Prediction: POSITIVE
```

This gives you direct control over the model’s inputs and raw outputs, which is essential for building custom applications.
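If you end up classifying several sentences at once, the interpretation step can be wrapped in a small helper. The sketch below is one possible shape, not a library function: `interpret_logits` and `ID2LABEL` are hypothetical names, the mapping is assumed to mirror `model.config.id2label` for this model, and the second row of logits is made up for illustration. It works on plain lists, so with the real model you would pass `logits.tolist()`.

```python
import math

# Assumed label mapping, mirroring model.config.id2label for the SST-2 model
ID2LABEL = {0: "NEGATIVE", 1: "POSITIVE"}

def interpret_logits(logit_rows, id2label=ID2LABEL):
    """Return a (label, probability) pair for each row of logits."""
    results = []
    for row in logit_rows:
        # Softmax over one row, shifted by the max for numerical stability
        shift = max(row)
        exps = [math.exp(x - shift) for x in row]
        total = sum(exps)
        probs = [e / total for e in exps]
        # Index of the most probable class
        best = max(range(len(probs)), key=probs.__getitem__)
        results.append((id2label[best], probs[best]))
    return results

# First row: the logits from Step 2. Second row: hypothetical values.
example_rows = [[-4.27, 4.68], [3.9, -3.2]]
for label, prob in interpret_logits(example_rows):
    print(f"{label}: {prob:.4f}")
```

Returning the probability alongside the label lets the calling code apply its own confidence threshold instead of trusting every prediction equally.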